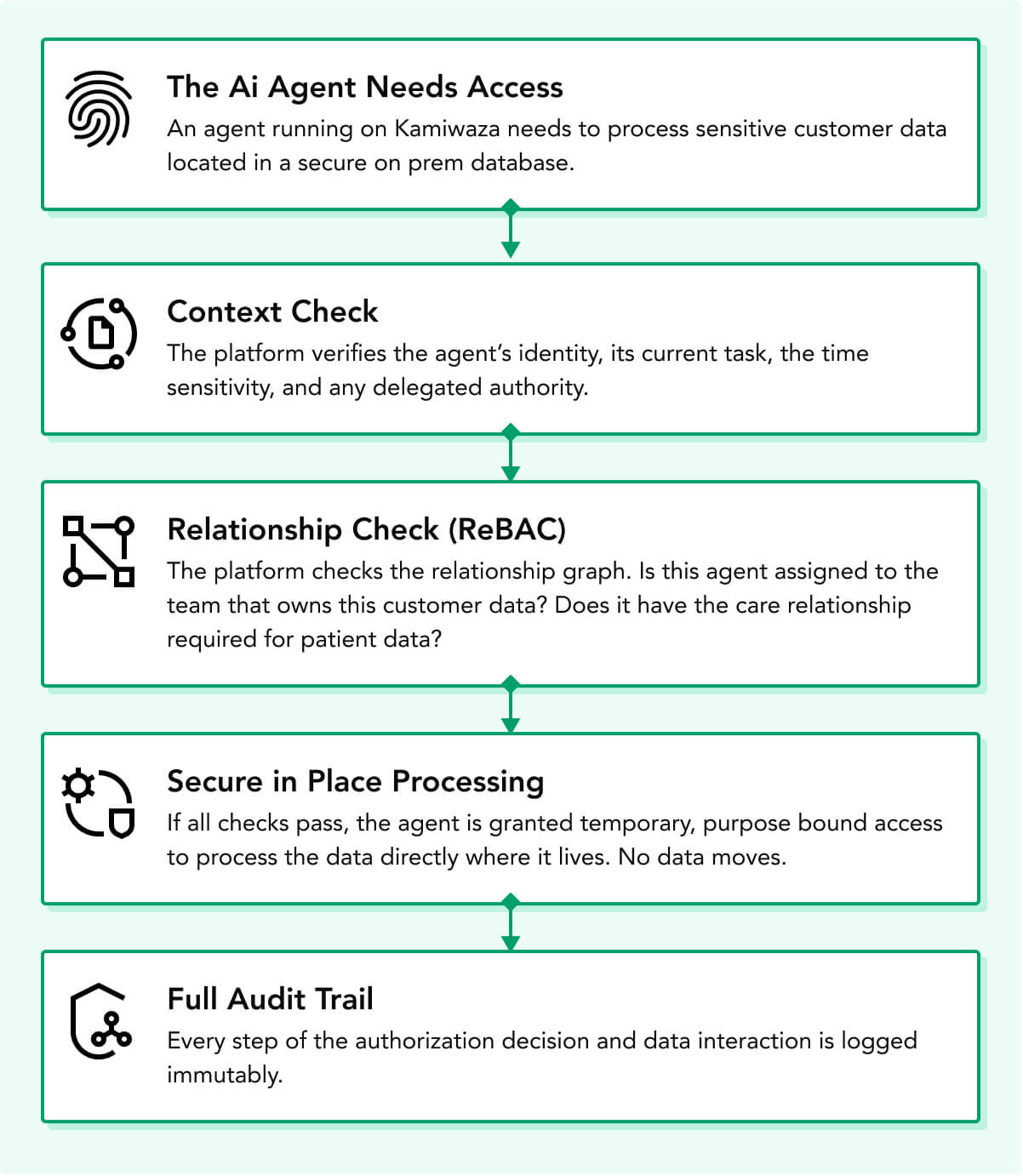

A single AI request may begin with a person, move through an application or agent, touch metadata and embeddings, execute on a model or peer node, and return an answer or action that must be governed before release. Every handoff along that path is a potential point of vulnerability.

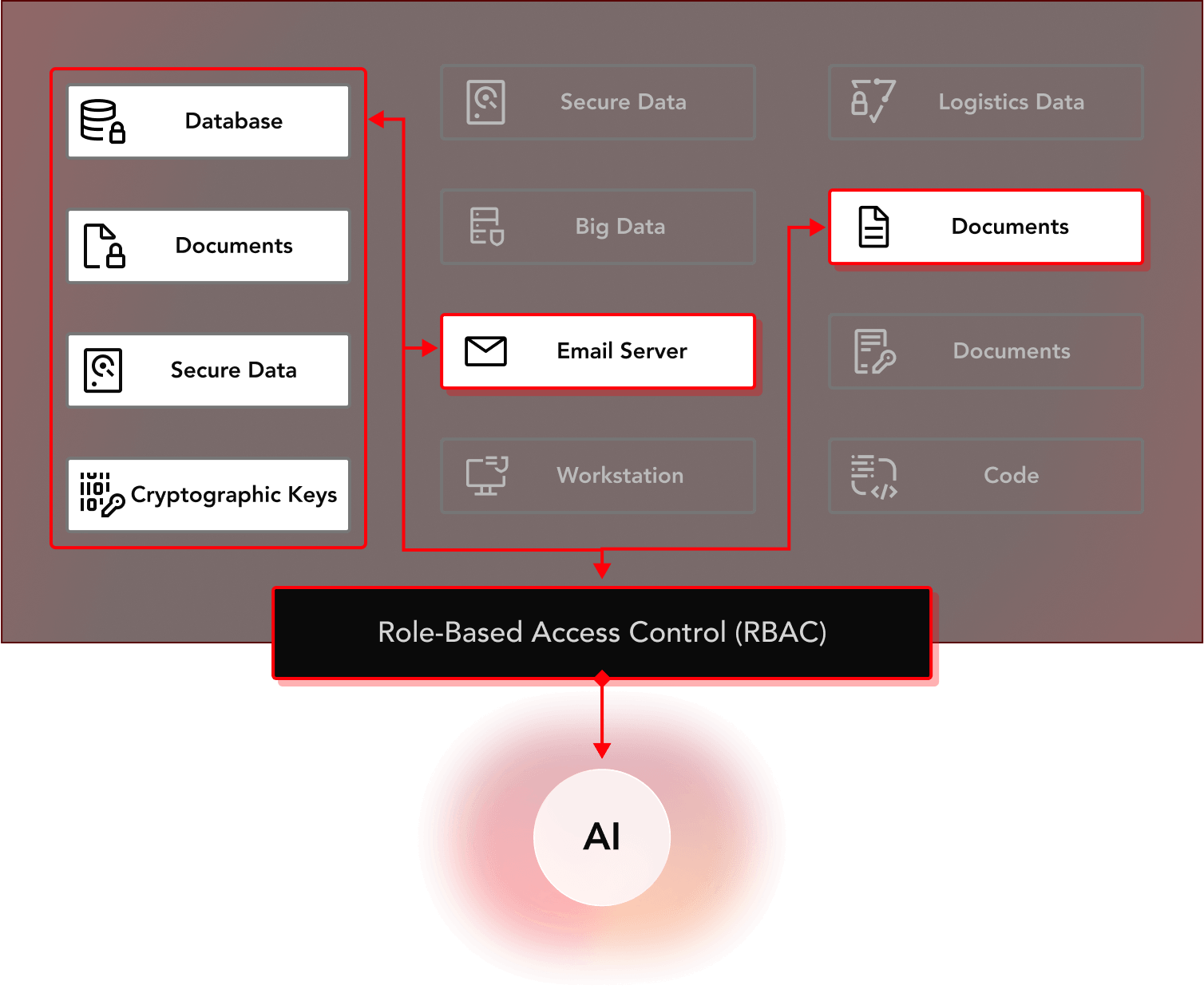

Traditional Access Controls Aren’t Enough for AI

Role-Based Access Control (RBAC) and Attribute-Based Access Control (ABAC) work when users access known applications through stable roles or attribute-based rules. They are less effective in AI environments, where workflows are dynamic and spread across multiple domains.

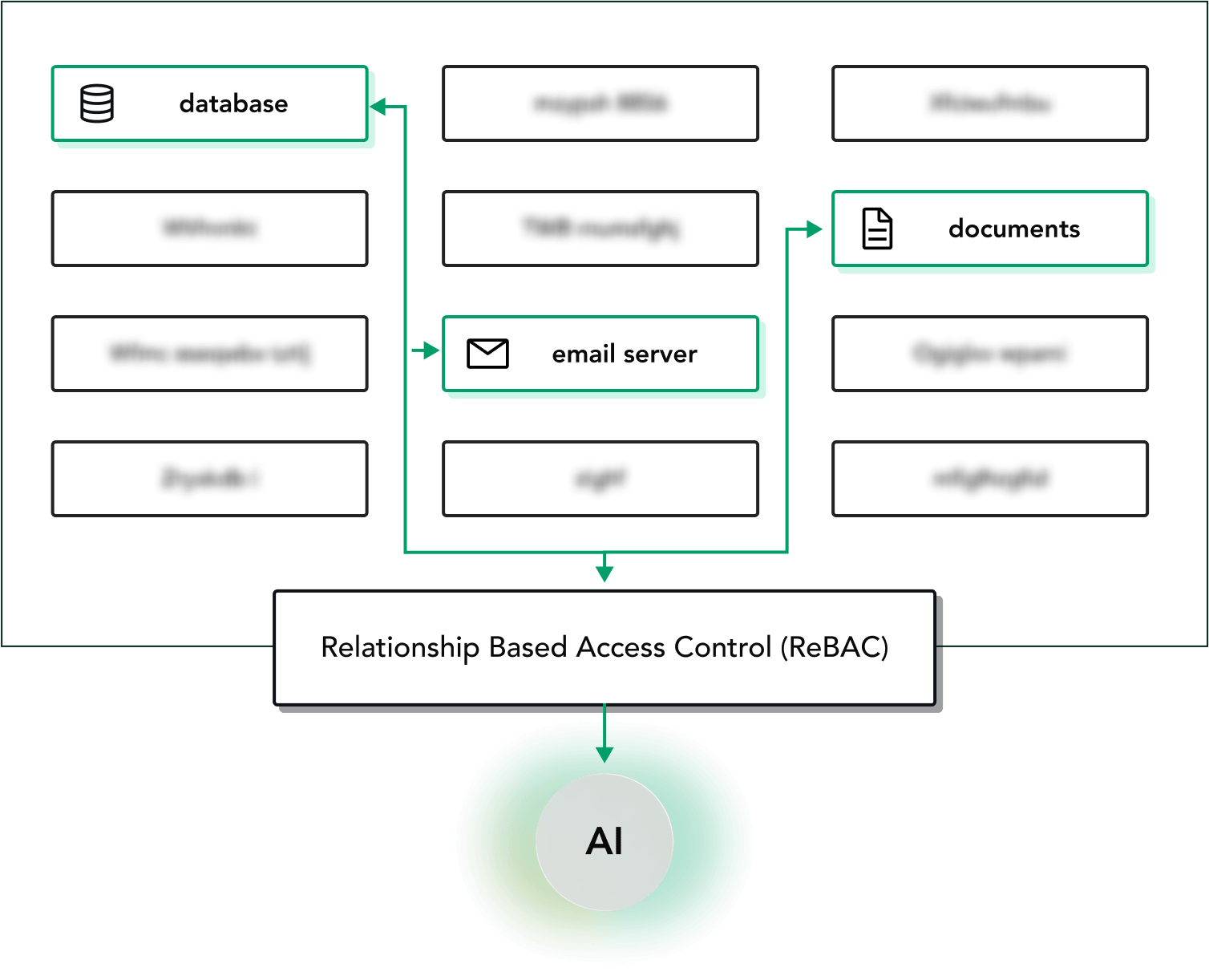

Access Should Consider Relationships

In agentic AI, the issue is not simply whether an identity matches a role or attribute. The real question is whether the current relationship between the actor, task, data, and organization supports access at that moment.

Roles and Attributes Can Change

An agent may act on behalf of a user for a specific project, under a limited scope, while handling sensitive data that should not be exposed beyond that context. Static roles and attributes alone cannot capture that context.
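To make the contrast concrete, here is a minimal Python sketch of a static role check next to a relationship-aware check. It is an illustration under stated assumptions, not a reference implementation: `Delegation`, `Resource`, `role_allows`, `relationship_allows`, the scope strings, and all sample identifiers are hypothetical names invented for this example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Delegation:
    """Hypothetical record: an agent acting for a user on one project."""
    agent_id: str
    user_id: str
    project: str
    scopes: frozenset
    expires_at: datetime


@dataclass(frozen=True)
class Resource:
    """Hypothetical data asset tagged with its project and sensitivity."""
    name: str
    project: str
    sensitive: bool


def role_allows(roles: set, action: str) -> bool:
    # Static, RBAC-style check: it looks only at the role and ignores
    # the task, the data, and who the agent is acting for.
    return action == "read" and "analyst" in roles


def relationship_allows(delegation: Delegation, agent_id: str,
                        resource: Resource, action: str,
                        now: datetime) -> bool:
    # Every condition ties the request to the current delegation:
    # the right agent, still within its time window, on the same
    # project, within scope, with an extra scope for sensitive data.
    return (
        delegation.agent_id == agent_id
        and now < delegation.expires_at
        and resource.project == delegation.project
        and action in delegation.scopes
        and (not resource.sensitive or "read:sensitive" in delegation.scopes)
    )


# Sample data (assumed values, for illustration only).
now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
delegation = Delegation(
    agent_id="agent-7",
    user_id="alice",
    project="project-a",
    scopes=frozenset({"read"}),
    expires_at=now + timedelta(hours=1),
)
in_scope = Resource("q3-forecast", "project-a", sensitive=False)
out_of_scope = Resource("payroll", "project-b", sensitive=True)

print(role_allows({"analyst"}, "read"))                                       # True
print(relationship_allows(delegation, "agent-7", in_scope, "read", now))      # True
print(relationship_allows(delegation, "agent-7", out_of_scope, "read", now))  # False
```

The role check approves any read by an analyst, regardless of which project the data belongs to. The relationship check approves the same read only while the delegation is valid and the data falls inside the project and scope the agent was given.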